When you get a call at 4 a.m. saying there is a critical issue with an application you are responsible for, you start analyzing the issue to find the root cause. Once you identify the source of the issue, you may wonder why it wasn't caught in the first place.

Despite the saying, "There's no 'I' in 'team,'" you ponder who dropped the ball. That curiosity comes with the territory whenever something is built by one group and used by another.

When it comes to software, things get even more unwieldy, because defects cannot be found by visual inspection. It's a strange world where, ideally, you write more code to test the code you wrote in the first place.

This additional test code has been the subject of many a debate: what it should be, how it should be written, and, more importantly, who should write it.

If you are on a well-funded team, be it within an enterprise or a startup, there is a good chance that you bask in the luxury of having dedicated people do this task for each application.

In other cases, either feature velocity suffers significantly to accommodate testing or, worse, there is no formal testing in place before an application goes out the door.

Traditional Development

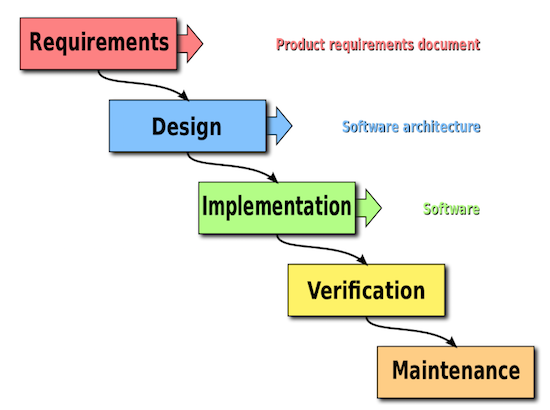

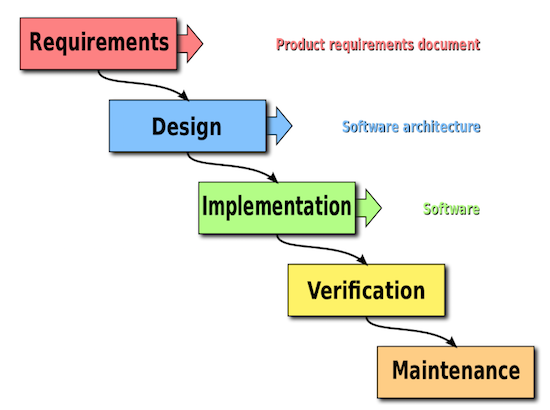

In the early days of software development, processes that worked for manufacturing were used for creating software, too. There was a long process of collecting requirements, designing, writing the code, and testing it. Each phase was self-contained, and the next phase would not begin until the current one concluded with a review and acceptance.

This process is famously dubbed the waterfall model. In this traditional setting, the requirements are thoroughly vetted before a single line of code is written. Then the development team writes the code, and the QA (Quality Assurance) team tests the application.

In some cases, QA also writes code to automate testing. In that case, the QA engineer is effectively upgraded to the role of software engineer, since they also need to understand how to write good code.

Figure 1: Waterfall Model for Software Development

Image Source: Wikipedia

Agile Development

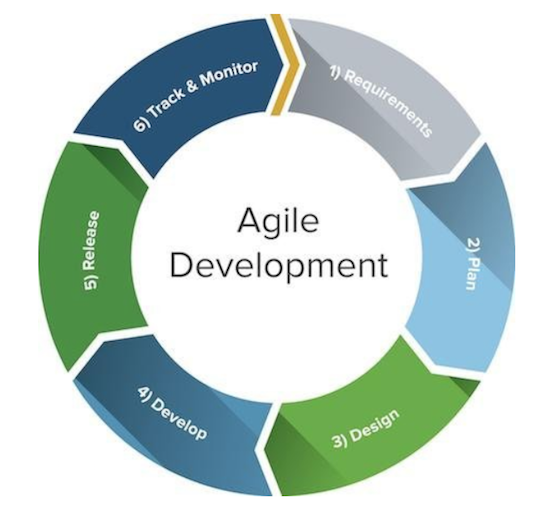

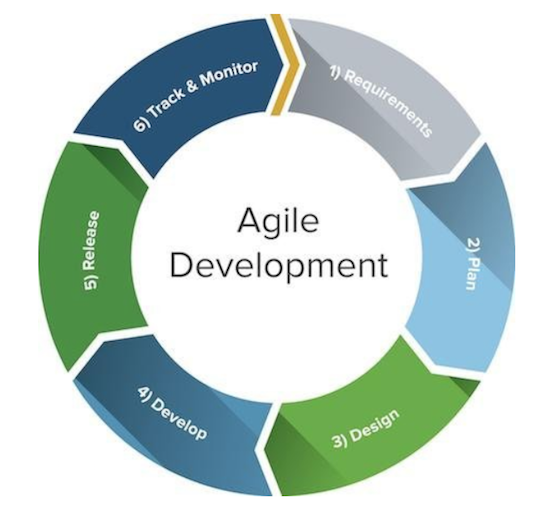

With the rise of software in practically every field and the need to keep up with users' hunger for more features, the pace of development had to pick up significantly compared to the waterfall timeline of months or years. Hence, agile methodologies have seen rapid adoption in the last few years. Scrum, Kanban, and Extreme Programming are some of the more popular methods.

Agile frameworks keep the Waterfall model's cycle of requirements, development, testing, and deployment, but shrink it into much shorter timescales to speed up the feedback loop.

Invisible Eddies

In addition to the main feedback loop in agile methods, there are smaller loops that act as cogs in the wheel of agility. Product management, in the process of gathering requirements for the application, has to find out what users need, come up with a plausible solution, and test it.

While this is the bread-and-butter cycle for the application, the same find-fix-test cycle repeats between different groups.

When the requirements are passed down to the development team, they may find that they are incomplete. Once the development ends and testing begins, assuming the test team has the right mindset of exploring ways to break the application, there is another eddy of missed user actions and edge cases.

Between testing and deployment, if the application is not tested in an environment similar to production, there are additional testing and fixing cycles involved.

Figure 2: Eddies in the Agile Development Process

Image Source: LinkedIn

To some extent, these eddies can be tamed with a good regression test suite. Such a suite ensures that no existing feature suffers at the expense of new ones, and the tests themselves can be updated when existing features are no longer applicable or need to be modified.
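As an illustration, here is a minimal sketch of a regression suite using Python's built-in `unittest` module. The pricing functions `apply_discount` and `apply_coupon` and the coupon codes are hypothetical, invented for this example. The point is structural: tests for the existing feature stay in the suite when tests for the new feature are added, so a change that breaks the old behavior fails the run.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical existing feature: apply a percentage discount to a price."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


def apply_coupon(price: float, coupon: str) -> float:
    """Hypothetical new feature: coupon codes built on top of apply_discount."""
    coupons = {"SAVE10": 10.0, "HALF": 50.0}
    # Unknown codes fall back to a 0% discount.
    return apply_discount(price, coupons.get(coupon, 0.0))


class RegressionSuite(unittest.TestCase):
    # Tests for the existing feature stay in the suite so a new release
    # cannot silently change its behavior.
    def test_discount_basic(self):
        self.assertEqual(apply_discount(100.0, 25), 75.0)

    def test_discount_rejects_invalid_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(100.0, 150)

    # Tests for the new feature are added alongside, not instead of, the old ones.
    def test_coupon_known_code(self):
        self.assertEqual(apply_coupon(80.0, "HALF"), 40.0)

    def test_coupon_unknown_code_is_no_discount(self):
        self.assertEqual(apply_coupon(80.0, "BOGUS"), 80.0)


if __name__ == "__main__":
    # exit=False lets the script continue after the test report is printed.
    unittest.main(argv=["regression_suite"], exit=False)
```

When an existing feature is later retired or changed, its tests are updated or removed deliberately, so the suite keeps describing what the application is supposed to do today.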

Get It out the Door

The logical conclusion of "working software," one of the Agile Manifesto principles, is the recent hot topic of automated, continuous cycles: continuous integration, continuous delivery, and continuous deployment.

Continuous integration is a practice that relies on test automation to check that the application is not broken when new commits are merged into the main branch of the repository.
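A CI server typically runs a step like the one sketched below on every proposed merge and treats a nonzero exit code as a failed build. This is a minimal Python sketch, not any particular CI product's configuration: `merge_gate` and the two sample checks are hypothetical names, and the tiny suites stand in for an application's real test suite.

```python
import unittest


def merge_gate(suite: unittest.TestSuite) -> int:
    """Hypothetical CI gate: run the suite and return a process exit code.

    0 means every test passed and the commit may be merged into the main
    branch; 1 means at least one test failed or errored, so the merge is blocked.
    """
    result = unittest.TextTestRunner(verbosity=1).run(suite)
    return 0 if result.wasSuccessful() else 1


def check_existing_feature() -> None:
    # Stand-in for a test that already passes on the main branch.
    assert sorted([3, 1, 2]) == [1, 2, 3]


def check_broken_commit() -> None:
    # Stand-in for a test that a bad commit has broken (6 != 7).
    assert sum([1, 2, 3]) == 7


if __name__ == "__main__":
    clean = unittest.TestSuite([unittest.FunctionTestCase(check_existing_feature)])
    broken = unittest.TestSuite([
        unittest.FunctionTestCase(check_existing_feature),
        unittest.FunctionTestCase(check_broken_commit),
    ])
    print("clean commit ->", merge_gate(clean))
    print("broken commit ->", merge_gate(broken))
```

In an actual pipeline, the script would end with `sys.exit(merge_gate(suite))` so the CI server sees the result and refuses the merge when the suite fails.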

Continuous delivery is the practice of automating all the steps needed to deploy at the click of a button at any given time. Continuous deployment eliminates even that single click and deploys the application automatically.
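The difference between the two practices comes down to one decision at the end of the pipeline. The sketch below models it in Python under simplifying assumptions: each automated stage is a function returning `True` on success, and `run_pipeline`, the stage names, and the `approved` flag (standing in for the button click) are all hypothetical.

```python
from typing import Callable, List


def run_pipeline(
    stages: List[Callable[[], bool]],
    deploy: Callable[[], None],
    continuous_deployment: bool,
    approved: bool = False,
) -> str:
    """Hypothetical release pipeline; returns a short status string."""
    # Every automated stage must pass before the build counts as releasable.
    for stage in stages:
        if not stage():
            return f"stopped at {stage.__name__}"
    # Continuous delivery: the build is ready, but a person presses the button
    # (modeled here by `approved`). Continuous deployment removes that step.
    if continuous_deployment or approved:
        deploy()
        return "deployed"
    return "ready to deploy"


def build() -> bool:
    return True


def run_tests() -> bool:
    return True


def package() -> bool:
    return True


def deploy() -> None:
    print("deploying to production")


if __name__ == "__main__":
    stages = [build, run_tests, package]
    print(run_pipeline(stages, deploy, continuous_deployment=False))
    print(run_pipeline(stages, deploy, continuous_deployment=False, approved=True))
    print(run_pipeline(stages, deploy, continuous_deployment=True))
```

With `continuous_deployment=False` and no approval, a green pipeline stops at "ready to deploy"; that releasable-at-any-time state is what continuous delivery promises, and continuous deployment simply skips the wait for approval.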

In the last few years, when startups were booming, releasing a lightweight application and then constantly rolling out features was seen as innovative. Lean startup and minimum viable product were seen as the cutting-edge practices of startup creation and software development. Being first to market was considered more important than users' first impressions.

In the early days of Facebook, Mark Zuckerberg's mantra was "Move fast and break things." It meant that features might not be perfect or fully baked, but the speed at which new features were added to the application was of more value.

Over time, however, he has also said that the mantra did not necessarily make them move faster, because they had to slow down to fix bugs. Users, meanwhile, began to notice the bugs, which hurt the product. Holding a first-to-market position while ensuring a well-built product was a double-edged sword.

Usha Guduri is a dynamic, team-spirited, performance-driven engineering professional with a blend of leadership essence and conceptual excellence.

Over twelve years of experience in the design and development of highly distributed, scalable, performance-optimized, low-latency systems, with an exceptional aptitude for both front-end and back-end technologies.

A proven record of over six years as a team lead, hiring and managing the best engineering talent across time zones and collaborating with Product, Marketing, and QA teams across continents with outstanding results.


Opinions expressed by the author are not necessarily those of WITI.

